The Hidden Hazard: Control Room Codes and Cognitive Load at Chernobyl

When people talk about the Chernobyl disaster, they usually focus on reactor physics, design flaws, and the infamous late-night test. All of that matters. But there’s another layer that rarely gets described in plain terms: the control room didn’t just operate the reactor—it operated a language.

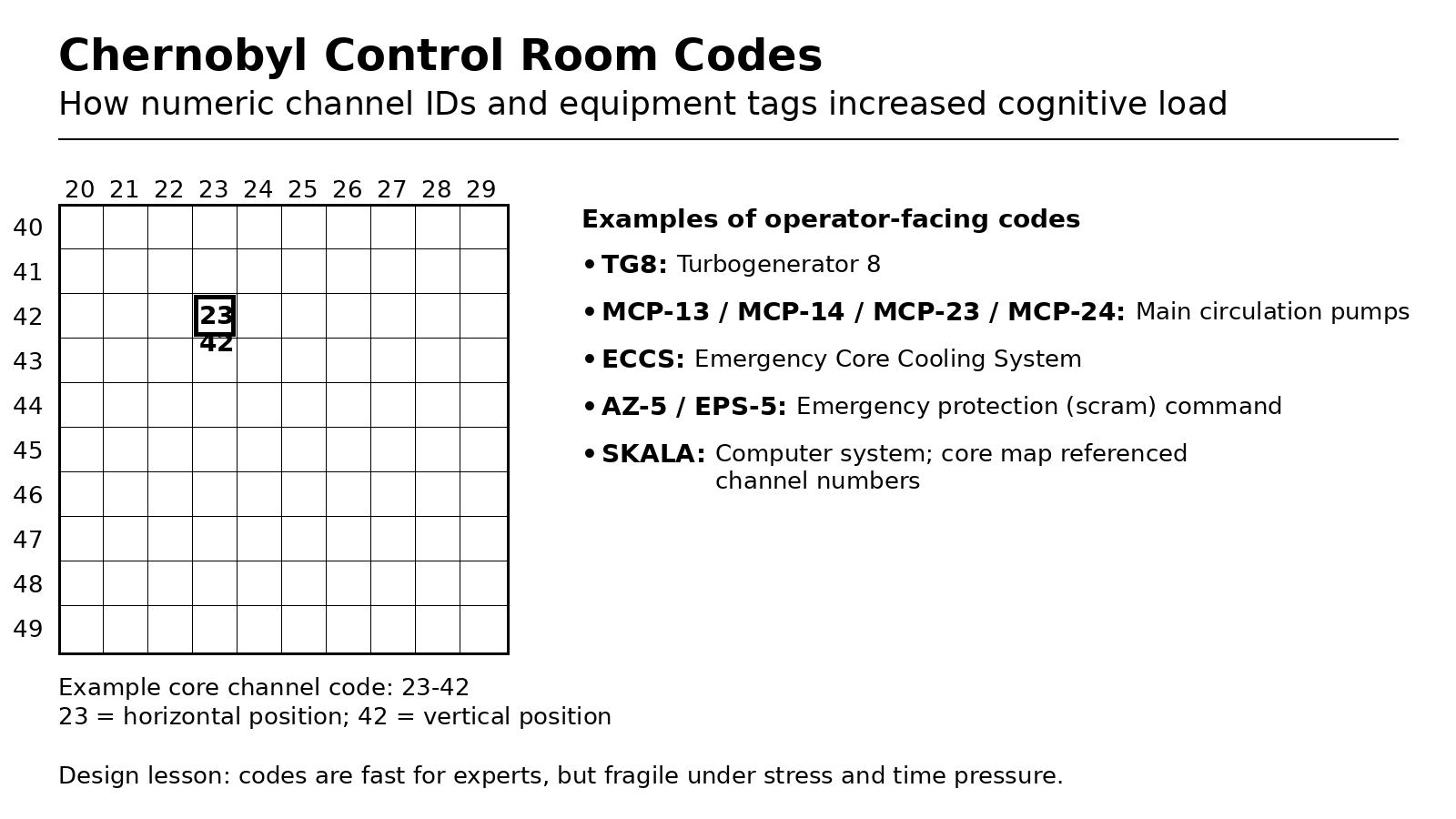

That language was made of codes: numeric core-channel identifiers, abbreviated system names, and equipment tags that were used in logs, procedures, and real-time troubleshooting. In an RBMK control room, the codes were not “optional shorthand.” They were often how the system was represented, and under stress that dependence on fast, accurate decoding can be a safety risk.

This article focuses on what we can document publicly about those codes, what they looked like, and why a code-heavy interface can become fragile when time is short and pressure is high.

What “codes” meant in an RBMK control room

In this context, “codes” aren’t secret spy ciphers. They’re the compact identifiers used to label and reference:

- Reactor core channels (a coordinate-style number that identifies a specific channel in the core)

- Major equipment (turbogenerators, pumps, systems)

- Safety functions and commands (scram/emergency protection naming)

- Computer and monitoring subsystems (systems feeding displays and calculations)

In other words: codes were the plant’s operating vocabulary.

The core map problem: alarms that pointed to numbers, not meaning

One of the clearest examples comes from the RBMK control-room “mimic” displays: large panels meant to give operators an overview of the reactor core.

Public descriptions of RBMK control rooms explain that one key display was a core map (core channel cartogram) made of tiles. Each tile corresponded to a channel in the reactor, and each tile had a channel number printed on a light cover. When a channel’s parameters were out of spec, the indicator lit.

Here’s the critical human-factors detail:

Operators had to type in the number of the affected channel(s) and then check instruments to determine which parameter was abnormal.

That means the alarm itself didn’t necessarily say “fuel channel overheating” in plain language. It often said, effectively: “Channel X has a problem.” The operator then had to work through several steps:

- identify the lit tile,

- read the channel number,

- enter that channel number into the workflow,

- and only then interpret the underlying physics.

This is not inherently “bad”—experts can become very fast at it. But it’s also easy to see how it adds steps at exactly the wrong moment.
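To make the extra steps concrete, here is a minimal Python sketch of the lookup sequence described above. Everything in it is hypothetical: the `CoreMap` class, the parameter names, and the normalized limits are illustrative stand-ins, not a model of the real control-room interface or of actual RBMK limits.

```python
from dataclasses import dataclass, field


@dataclass
class CoreMap:
    """Toy tile-based core map (all names and values are hypothetical)."""
    # channel code -> {parameter name: (reading, upper limit)}, normalized units
    channels: dict = field(default_factory=dict)

    def lit_tiles(self):
        """Steps 1 and 2: the display only shows WHICH channels are out of spec."""
        return sorted(
            code for code, params in self.channels.items()
            if any(value > limit for value, limit in params.values())
        )

    def query(self, code):
        """Step 3: the operator keys in a channel number to see its readings."""
        return self.channels[code]


def diagnose(core_map, code):
    """Step 4: only after the lookup can the abnormal parameter be named."""
    return sorted(
        name for name, (value, limit) in core_map.query(code).items()
        if value > limit
    )


core = CoreMap({
    "23-42": {"power_density": (0.8, 1.0), "outlet_temp": (1.2, 1.0)},
    "23-43": {"power_density": (0.7, 1.0), "outlet_temp": (0.9, 1.0)},
})

for code in core.lit_tiles():
    print(code, "->", diagnose(core, code))
```

The point of the sketch is structural: `lit_tiles()` returns only identifiers, so the meaning of an alarm is unavailable until `query()` has been called with the right code.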

A real, physical example of a core-channel code: “23-42” (or “23.42”)

A particularly concrete artifact exists in the United States: ORAU’s Museum of Radiation and Radioactivity has a light cover from an RBMK control-room mnemonic display associated with Chernobyl. That cover is marked “23.42” (also written as “23-42” in ORAU’s accompanying material).

ORAU’s description (provided by Steven Wootten, with additional commentary from Csaba Szabo) explains the meaning:

- 23.42 denotes a position within the core, which is laid out as a grid indexed by horizontal and vertical numbers.

- The mnemonic display used different colors to mark channel types (e.g., white lamps for fuel channels, yellow for fuel channels with power density probes, green for control rod channels).

So a “code” like 23-42 isn’t a random ID. It’s a coordinate inside a grid-based representation of the reactor core.
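As a small illustration of treating the code as a coordinate pair rather than an opaque label, the sketch below splits either spelling into its two components. It assumes two digits per component, based only on the single example above. It deliberately keeps the components as digit strings, because the axis order and the numbering scheme are not specified here. The function names are hypothetical.

```python
import re

# Accept either separator seen for this artifact: "23.42" or "23-42".
# Two digits per component is an assumption drawn from that one example.
_CODE = re.compile(r"^\s*(\d{2})[.\-](\d{2})\s*$")


def parse_channel_code(text):
    """Split a channel code into its two grid components (as digit strings)."""
    match = _CODE.match(text)
    if match is None:
        raise ValueError(f"not a channel code: {text!r}")
    return match.group(1), match.group(2)


def canonical(text):
    """Normalize both spellings to one hyphenated form."""
    first, second = parse_channel_code(text)
    return f"{first}-{second}"


print(canonical("23.42"))
print(canonical("23-42"))
```

Normalizing the two spellings to one form is a modest example of the consistency that matters when the same identifier appears on a panel, in a log, and in spoken instructions.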

The key safety point is not that “23-42 is confusing.” The point is that in a code-first system, the operator’s job includes constantly converting codes → meaning → action, often while multiple things are happening at once.

SKALA: when the computer becomes part of the code workflow

The RBMK core map was linked to the SKALA computer system. Public descriptions note that the core map represented information from SKALA.

SKALA itself is described as collecting data from about 4,000 sources, cycling through measurements, and calculating results—taking 10 to 15 minutes per cycle.

This matters because it shows the control room wasn’t just reading instruments. Operators were also interacting with an early computer workflow that:

- aggregated massive sensor input,

- produced calculations on a time delay,

- and required operators to use channel identifiers to retrieve specifics.

In a fast-moving abnormal event, time delays and lookup steps can increase uncertainty and workload.
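A rough back-of-the-envelope calculation shows the scale of that delay. The sketch below uses only the two figures quoted above (about 4,000 sources, 10 to 15 minutes per cycle). The assumptions that the work is spread evenly across a cycle and that cycles run back to back are simplifications made here for illustration, not documented SKALA behavior.

```python
SOURCES = 4000             # "about 4,000 sources" (figure quoted in the text)
CYCLE_MINUTES = (10, 15)   # "10 to 15 minutes per cycle" (figure quoted in the text)


def average_rate(sources, cycle_minutes):
    """Average sources handled per second, assuming an even spread over the cycle."""
    return sources / (cycle_minutes * 60)


def seconds_between_results(cycle_minutes):
    """Gap between successive calculated results, assuming back-to-back cycles."""
    return cycle_minutes * 60


for minutes in CYCLE_MINUTES:
    print(
        f"{minutes} min cycle: "
        f"{average_rate(SOURCES, minutes):.1f} sources/s on average, "
        f"new result every {seconds_between_results(minutes)} s"
    )
```

Under these assumptions, a calculated value on display could be many minutes old by the time an operator acts on it, which is long relative to a transient that develops in seconds.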

Equipment tags: TG8, MCP-13… and why tags are “codes,” too

Chernobyl’s test and the broader operations of Unit 4 involved many pieces of equipment that were routinely referenced by short tags.

In Mikhail V. Malko’s technical discussion of RBMK design features and accident progression, we see examples like:

- TG7 and TG8 (turbogenerators)

- MCP-11, MCP-12, MCP-13, MCP-14, MCP-21, MCP-22, MCP-23, MCP-24 (main circulating pumps), including which pumps were powered from TG8 and which from the grid

- ECCS (emergency core cooling system) being switched off per the experiment program.

These tags are not “optional abbreviations.” They are the identifiers used to coordinate actions across a complex system—especially in procedures and logs.

And again, the human-factors question is not “are tags bad?” It’s:

- How quickly can a tired operator distinguish MCP‑13 from MCP‑12?

- How hard is it to ensure a spoken instruction points to the right component every time?

- How do you reduce the chance of confusing similar-looking identifiers under stress?
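One crude way to put a number on “similar-looking” is to count how many pairs of tags differ by a single character. The sketch below does that for the tags listed above. The one-character measure is an assumption chosen for simplicity, not an established human-factors metric, and the function names are hypothetical.

```python
from itertools import combinations

# Tags as listed in the text above.
TAGS = ["TG7", "TG8", "MCP-11", "MCP-12", "MCP-13", "MCP-14",
        "MCP-21", "MCP-22", "MCP-23", "MCP-24"]


def differs_by_one_char(a, b):
    """True when two equal-length tags differ in exactly one position."""
    return len(a) == len(b) and sum(x != y for x, y in zip(a, b)) == 1


def confusable_pairs(tags):
    """All unordered pairs of tags that are one character apart."""
    return [(a, b) for a, b in combinations(tags, 2) if differs_by_one_char(a, b)]


pairs = confusable_pairs(TAGS)
print(len(pairs), "of", len(TAGS) * (len(TAGS) - 1) // 2, "pairs differ by one character")
```

By this measure each pump tag has four one-character neighbours (three that share its first digit and one that shares its second), which is the kind of closeness that distinctive labeling is meant to counter.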

Action codes: AZ‑5 / EPS‑5 and the risk of coded commands

Even the emergency shutdown command is commonly referenced by a code name.

Malko reports that the destruction occurred seconds after the operator pressed the AZ‑5 scram button.

In some English-language reporting and analysis, the same action is also described as EPS‑5 (a translation/labeling variant for the emergency protection function), including references to the “EPS‑5 emergency button.”

From a safety perspective, this highlights a subtle issue: when critical actions are referenced by coded labels, clarity depends on shared understanding and consistent vocabulary—especially during handoffs, training, and cross-team communication.

Why code-heavy environments can increase risk

None of this means Chernobyl happened “because of codes.” But code density can increase the probability of human error in several ways:

1) Short-term memory has limits—especially under accident stress

OECD NEA’s discussion of human error notes that one source of error is short-term memory limitations, and that under stress, information can be forgotten as new facts and tasks are added.

Codes increase reliance on exactly that fragile mental space:

- “Which channel is lit?”

- “What system is that tag in?”

- “Was it MCP‑23 or MCP‑24 that was tied to TG8?”

- “What does this specific alarm + number combination imply?”

2) More translation steps mean more chances to slip

A plain-language system can sometimes tell you “Cooling flow low in Loop A.”

A coded system might tell you “MCP‑23” and “Channel 23‑42.”

Each translation step (code → meaning → action) introduces opportunities for:

- misreading,

- mis-keying,

- selecting the wrong control,

- or delaying a decision while confirming.
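The compounding effect can be illustrated with a simple probability sketch. If each step carried the same small, independent chance of a slip, the chance of at least one slip across n steps would be 1 - (1 - p)^n. The 1% per-step figure below is purely hypothetical and is not a measured error rate. Real steps are neither identical nor independent.

```python
def chance_of_at_least_one_slip(per_step_error, steps):
    """Probability that at least one of `steps` independent steps goes wrong."""
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be between 0 and 1")
    return 1.0 - (1.0 - per_step_error) ** steps


# Hypothetical 1% slip rate per step, chosen only to show how the risk grows.
for steps in (1, 2, 4):
    print(steps, "steps:", round(chance_of_at_least_one_slip(0.01, steps), 4))
```

The absolute values mean little. What matters is that the total grows with every added step, so removing a translation step lowers the overall chance of a slip even if no individual step gets easier.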

3) Control-room design can induce errors

The NEA also emphasizes that control-room design should help operators avoid faulty diagnosis and that distinctive and consistent labeling helps prevent human error.

A system dominated by dense identifiers can become less “distinctive,” especially when codes are visually similar.

4) Low-power RBMK operation already increased cognitive load

Wikipedia’s RBMK overview notes that at very low power levels, automatic systems may be disabled and in-core sensors inaccessible, making control difficult and forcing operators to rely more on intuition.

That’s exactly the kind of condition where adding code translation and lookup steps can compound risk: fewer reliable signals, more mental workload.

A key lesson: modernization often removes “type the channel number”

Notably, some RBMK plants have themselves moved away from the “type the channel number” workflow.

Descriptions of later upgrades note that some RBMK control rooms replaced mimic displays and many chart recorders with video walls. These upgrades can eliminate the need to type channel numbers by allowing operators to point at a tile and reveal parameters directly.

That change reads as an acknowledgment of the usability problem: when the interface can surface meaning without forcing code translation, the operator has fewer steps to perform and fewer chances to slip.

Takeaways for Chernobyl (and for safety-critical design)

- Codes aren’t inherently unsafe—they’re efficient for experts and necessary in complex plants.

- But a code-first interface can become brittle under stress, fatigue, and time pressure.

- In RBMK control rooms, some alarms and overviews pointed operators first to a channel number, requiring additional steps to identify the true problem.

- Physical artifacts (like the 23.42 mnemonic panel light cover) show how deeply the core was represented through numeric identifiers.

- Modern approaches aim to reduce the number of translation steps and present meaningful context earlier in the operator workflow.